KEncode: Packing Data for Strict Limits

Sometimes 80 characters of URL is all you get, and JSON won't survive the trip. kencode squeezes structured state through it, with the schema written as a plain Kotlin data class.

Over the past few years, I found myself occasionally writing the same boilerplate: manually packing bits of application

state into tight, heavily character-limited strings. It ended up with me creating a library for it called kencode. But

first it’s story time… and then a little explanation of the underlying tech of why kotlinx.serialization is so cool

and THEN I’ll go over kencode.

It all started with URL callback links on an integrated Search Engine Results Page (SERP). In a previous project at Theca, we had built a search engine embedded directly into a client’s website. When users clicked a search result, the link first redirected to our servers so we could register telemetry for the click before finally sending them to the actual target page.

This is standard tracking infrastructure stuff. But if enough state can be encoded directly into the URL, the tracking server can bypass an expensive database lookup entirely. In this particular case, we needed to pass the query ID, the user ID, the document ID, and the exact position in the SERP (the redirection target itself is appended as well, but does not benefit from compression). One database call is not much, but latency does matter for initial impressions.

Having a short URL here is nice, they look more professional, and there is a limit to how long URLs can be (browser

specific). We also want there to be no special characters in the encoded result. That includes hyphens and underscore,

since that would otherwise break the word selecting logic. Try to select the entire path by double-clicking in this URL

and you’ll see: https://example.com/hyphen-path. But here it works just fine to select dQw…:

https://www.youtube.com/watch?v=dQw4w9WgXcQ since it’s a single word.

Anyway…

Then the same encoding problem happened again with Kubernetes pod names. I was dynamically spinning up short-lived jobs

and wanted to embed trace IDs somehow. Naturally, this metadata should also be stored in Kubernetes labels so it remains

queryable with kubectl. But since you need a unique name for a pod regardless you might as well use something

more informative than the default random suffix, so I put it in the name.

Besides, relying on labels to pass execution state creates tons of error-prone boilerplate. To read that state back, you typically have to fetch the labels by name and manually parse strings, something like:

val clientId = pod.labels["clientId"]?.toIntOrNull()

?: throw Exception("Missing clientId")

val batchId = pod.labels["batchId"]?.toIntOrNull()

?: throw Exception("Missing batchId")

val retryCount = pod.labels["retryCount"]?.toIntOrNull() ?: 0

val isPriority = pod.labels["isPriority"]?.toBooleanStrictOrNull() ?: falseKubernetes also imposes a strict 63-character limit on names and only allows alphanumeric characters and hyphens. Encoding efficiency becomes a limiting factor here.

Later, I ran into this encoding problem a third time while implementing stateless pagination links for that SERP. We had

built a complex hybrid search system merging traditional keyword matching with semantic vector search. Paginating

correctly through these blended results meant we had to carry internal ranking state from page to page. This state lived

entirely inside a ?next=xxx query parameter, meaning the payload had to be compact, URL-safe, and opaque to the user.

And now, I find myself needing it a fourth time for my current project Eignex. It’s an optimization engine for

doing structured optimization in production to automatically tune things like model parameters or ranking weights. Think

of it like an advanced multi-variate A/B test. It requires tracking chosen values for the optimization problems until

we have a result, at which point we update the optimization algorithm. By potentially passing that state in a token to

the front-end and back we can avoid storing it in a massive dict of user ID to settings on the back-end.

I realize this is not an everyday problem, but I have now encountered it four separate times. I think the ability to pack complex state into a tiny string is a useful architectural trick. Doing it manually each time is error-prone.

This is where kencode shines. You define a data class and get strong typing directly from the decoded payload:

@Serializable

data class JobState(

val clientId: Int,

val batchId: Int,

val retryCount: Int?,

val isPriority: Boolean

)

val state = JobState(119, 210, null, true)

val encodedState = EncodedFormat.encodeToString(state)

// This encodes the object into the string:

// 03W8mJ

val decodedState = EncodedFormat.decodeFromString<JobState>(encodedState)For comparison, the same object in other encodings:

| Encoding | Length | Output |

|---|---|---|

| JSON | 66 chars | {"clientId":119,"batchId":210,"retryCount":null,"isPriority":true} |

| Protobuf + Base64 | 10 chars | CHcQ0gEgAQ |

| kencode (Base62) | 6 chars | 03W8mJ |

kencode is implemented as a custom format on top of the kotlinx.serialization library, which has quite a different

approach to serialization compared to other JVM libraries. Why that is the case requires some context.

Why kotlinx.serialization?

Before libraries like modern Jackson became the standard, serializing Java objects usually involved writing manual boilerplate. If you need to support multiple formats like Protobuf in addition to JSON you will suffer. Manually crafting custom serializers for every single combination of data type and output format (the classic NxM problem) is simply not the way.

To reduce this boilerplate, runtime reflection libraries like Gson and Jackson became popular. Under the hood, when an object is serialized, these libraries inspect the class at runtime to find its fields, their types, and their values. They map these fields to sequential tokens on the fly. This makes standard JSON-focused libraries easy to use, but not necessarily easy to extend.

The sequential model of serializing makes it difficult to create formats that perform aggregate operations on the entire class. kencode relies on exactly this kind of optimization to compact the payload, like grouping all boolean fields and nullability flags into a single bitmask header.

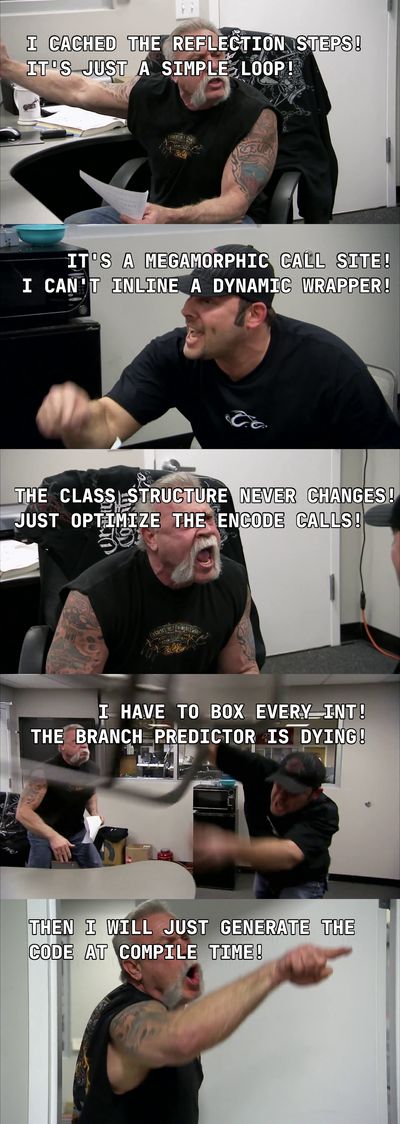

There is also a hard performance ceiling on the reflection, and here is some sage advice:

never

ignore

the

cost

of

serialization.

Reflection libraries do usually cache the reflection steps, but the issue is not the reflection itself.

It’s that interpreting these cached steps at runtime is inherently slower than executing statically compiled code.

When a reflection library loops over the fields of your class, it essentially calls a method like

serializer.write(fieldValue) over and over. Since your fields are all different types, that is a

megamorphic call site which the compiler can’t inline or optimize well.

This is why kotlinx.serialization takes another approach completely. Instead of relying on reflection at runtime, it generates static serializers at compile time. The approach is similar to Rust’s serde framework [1], allowing for highly optimized serialization without resorting to manual boilerplate.

“This all sounds good but where is the evidence?” It’s probably what I would think at this point. Well, there is actually a recent study comparing kotlinx.serialization to Gson and Jackson (full disclosure: the journal it’s published in is a bit dubious, but the actual benchmark methodology looks good). They found that the static compiled approach outperforms Gson and Jackson in most cases in both CPU and memory. kotlinx.serialization was especially good with small payloads with many repetitions. For very large payloads, Jackson was slightly faster. These results are also backed up by this benchmark for CPU only.

In kotlinx.serialization, when a Kotlin data class is annotated with @Serializable, a compiler plugin hooks directly into the build process. It inspects the exact “shape” of the data class and synthetically generates a custom KSerializer implementation for it. Because this happens at compile time, there are no expensive runtime reflection loops or type-guessing. The generated code is strictly typed. This makes JIT happy, which is why kotlinx.serialization is good in high-repetition benchmarks.

The plugin handles what you’d expect from a serialization library:

- Primitives: Mapped directly to basic, unboxed encoder instructions.

- Generics: The generated serializers simply accept child serializers as constructor arguments, so something like a

Response<T>knows exactly how to serialize its generic payload. - Polymorphism: annotating a sealed class automatically generates a serializer that injects a class discriminator

(like a

"@type": "MyClass"string) so the decoder knows which specific subclass to instantiate later.

The generated serializer for JobState (from above) will look like this:

// Generated automatically by the @Serializable compiler plugin

object JobStateSerializer : KSerializer<JobState> {

override val descriptor: SerialDescriptor =

buildClassSerialDescriptor("JobState") {

element<Int>("clientId")

element<Int>("batchId")

element<Int?>("retryCount")

element<Boolean>("isPriority")

}

override fun serialize(encoder: Encoder, value: JobState) {

val composite = encoder.beginStructure(descriptor)

composite.encodeIntElement(descriptor, 0, value.clientId)

composite.encodeIntElement(descriptor, 1, value.batchId)

composite.encodeNullableSerializableElement(

descriptor, 2, Int.serializer(), value.retryCount

)

composite.encodeBooleanElement(descriptor, 3, value.isPriority)

composite.endStructure(descriptor)

}

override fun deserialize(decoder: Decoder): JobState {

// This method is analogous to serialize and a bit longer, due to

// formats with arbitrary ordering like JSON.

}

}The benefit here is that there are no generic loops and the call sites are strictly typed (monomorphic), which is a massive speed advantage!

If you’re more curious about the details of how the code generation works I really recommend this post. For example, what about which constructor to call (and how) in deserialize?

Notice how serialize just calls methods on an Encoder. The KSerializer provides the data shape while the Encoder writes it. This separation is why it’s so convenient to do custom formats in kotlinx.serialization.

So to wrap up so far, kotlinx.serialization has three layers:

- Format (StringFormat or BinaryFormat): The entrypoint of the library, like

Json.encodeToString()orProtoBuf.encodeToByteArray(). This is also where you configure and create the underlying encoder/decoders. - Encoder and Decoder: The actual format implementation. They map the shape from the serializer into the logical structure of the output format.

- Serializer: Generated at compile time for classes annotated with @Serializable or manually constructed.

How It Works

Let’s dive into kencode.

I ended up splitting it into three separate pieces: a compact binary format, a general byte-to-text encoder, and a small composition layer that turns the whole thing into a normal string format. The binary format and text encoders can be used separately.

1. PackedFormat

PackedFormat is the biggest part of the library. It contains the logic to serialize Kotlin objects into small byte

arrays.

The format assumes both sides already agree on the schema. This is quite a strong assumption and definitely not what you want for persistence or cross-language communication. But when the assumption holds, we can save a lot of space not encoding structural information that both sides already know.

Its other core optimizations are:

-

Bitmask headers: boolean fields and nullability markers are packed into a compact bitset header, costing 1 bit per field instead of the usual 1 byte.

-

Merged nested headers: bitmask bits from nested class fields are collected into a single root-level header, eliminating the per-class byte-alignment padding that would otherwise be wasted at each nesting boundary.

-

Variable-length integers: Standard integer fields waste space because they always consume 4 or 8 bytes, even for small numbers. We shrink them using varint (LEB128) and ZigZag encodings[2].

-

Varint works by using the most significant bit (MSB) of each byte as a “continuation flag.” If the bit is

1, more bytes follow; if0, it’s the final byte. This allows small positive numbers to squeeze into a single byte. It’s kind of like how UTF-8 works if you’re familiar with it. -

ZigZag fixes a critical flaw in varints: negative numbers. In standard two’s complement binary, a

-1is full of1s, meaning it would take the maximum number of bytes to encode! ZigZag maps small negative numbers to small positive numbers (0 → 0, -1 → 1, 1 → 2, -2 → 3, etc.). This strips away the leading ones, keeping the bit-length short so varint can pack them tightly.

Varint is the default encoding in kencode (and in protobuf). You can switch encoding on a field with annotations or by configuring the format defaults. Enum ordinals are always varint-encoded automatically.

-

-

Collection bitmaps: boolean lists and nullable element lists pack their flags into a leading bitmap rather than storing one byte per element.

Together these optimizations explain how the JobState example was compacted. The boolean and nullability flag is

combined in the header, and the ID integers take one and two bytes respectively.

The header for a flat class is straightforward: one bit per boolean field, one bit per nullable field

(0 = null, 1 = present), packed into a bitset with the smallest number of bytes. For JobState, that is two

bits total, which is just a single byte. The field data follows immediately after.

Nesting complicates this. If JobState had a nested class, say a JobConfig with its own boolean

fields, the naïve approach would write a separate header for each class in the tree. But class

boundaries force byte alignment: even two bits require a full byte, so you waste bits at every boundary.

Instead, we merge all bits from the root and every non-nullable nested class into

a single shared header at the very front. A nested class that contributes five bits just reserves

exactly five positions in that shared header. No byte boundaries are crossed until all the bits

are written together at the root.

Collections work differently due to their dynamic size. The element count comes first as a varint and then each value is

packed after. There is a minor optimization for List<Boolean>: it’s compressed into a bitset instead. This is probably

not very commonly used, but the same logic applies to nullable lists which is more useful. So e.g. List<Int?> works like

this: a bitmap records which positions are null, and the non-null values follow packed end to end.

The header-first layout requires you to write data you haven’t processed yet. Standard stream-based frameworks aren’t built for this; to force them to do it, you would have to load the entire object into an intermediate representation in memory before writing anything out.

Because kencode knows the exact schema via the SerialDescriptor, it avoids the intermediate tree entirely.

beginStructure just allocates a localized byte array and reserves the exact number of bits for the header. As it walks

the fields, booleans and nulls update a bitmask while the raw encoded values go straight into the array. Then

endStructure writes the bitmask and flushes the array right after it. You get the correct two-phase layout with minor

memory overhead.

PackedFormat is the layer that actually reduces the payload. Everything after this is really about transport.

2. The Text Layer: ASCII-Safe Codecs

Transporting byte data as text is a common operation and usually handled by Base64 encoding it and moving on. In

kencode, we have more ByteEncoding options (the interface for translating raw bytes to a string and back).

Base64 and Base64Url are there mostly for interoperability, and they’re also a bit faster than the base-N codecs since the encoding is just a simple bit-shuffle. Base85 is useful when density matters more than a conservative character set. The most interesting one is really Base62 (which is also the default choice). It solves the problem of using non-alphanumeric characters while staying reasonably dense.

BaseRadix handles arbitrary alphabets generically. It basically works like this: you treat the entire array of

bytes as one massive number, divide it by your base (like 62), and map the remainders to characters in your

alphabet. It is the exact same underlying math as converting binary to standard decimals, just using a custom

string of letters and digits. So any alphabet works. Base36 uses only lowercase, for example, and you could also

plug in the base-58 alphabet Bitcoin uses to avoid visually ambiguous characters like 0/O and I/l.

But there is a catch when implementing this. To do that base conversion math, you have to load those raw bytes into a

BigInteger. As your payload gets larger, BigInteger division becomes slower, so the naïve version is O(n^2).

The encoder uses a trick here by chunking the input in pre-defined sizes; this is a const parameter in the algorithm.

Instead of processing the whole payload as one giant number, it slices the data into fixed chunks and converts each

block individually. This reverts the solution to O(n) just like Base64. You do lose a tiny fraction of a byte to

rounding overhead every time a new block starts.

The block size itself is a tradeoff. Smaller blocks mean more rounding overhead at each boundary, larger blocks mean

more BigInteger work per block. 32 bytes turned out to be a good sweet spot. The same code also handles “massive”

alphabets (anything larger than 256 characters, where one input byte maps to less than one output character).

UnicodeRangeAlphabet exposes this: it takes a contiguous slice of the Unicode BMP, up to ~55k code points, and

gives you about one character per two bytes at the cost of a much noisier output. The encode and decode paths are

slightly different because leading zero bytes have to be preserved explicitly, but the underlying base-N arithmetic

is the same.

The chunking also needs an inverse mapping for the decoder. For a given block, encoding N bytes produces a fixed

number of characters M, but because M = ceil(N * 8 / log2(base)) rounds up, multiple byte counts can land on the

same character count. So we precompute a lookup that goes the other way (character count back to byte count) so

decoding a partial trailing block doesn’t have to guess the original length.

The asymptotic cost per input byte falls out of the alphabet size:

| Codec | chars / byte | Alphabet |

|---|---|---|

| Base36 | 1.55 | 0-9 a-z |

| Base62 | 1.34 | 0-9 a-z A-Z |

| Base64 | 1.33 | 0-9 a-z A-Z + 2 symbols |

| Base85 | 1.25 | 85 printable ASCII, incl. punctuation |

Base64 and Base62 are nearly tied, with Base64 winning by a hair because its math aligns on bit boundaries. But Base62 buys you an alphanumeric-only output, which is usually the reason you reached for it in the first place.

For a concrete example, here is The quick brown fox jumps over the lazy dog (43 bytes) in each:

Base36 (68): 23qhn8p9aco732ripmr6mhzfrtsmxcxxzjdmm3vgas1xzpdkz80fuvjknh7nfo0s6fdz

Base62 (58): k0YiLeAWe79bmxSBiGjowzAh4fSmcMsLmNNmsSowlyAaaWecFKMVGnsquH

Base64Url (58): VGhlIHF1aWNrIGJyb3duIGZveCBqdW1wcyBvdmVyIHRoZSBsYXp5IGRvZw

Base85 (54): <+ohcEHPu*CER),Dg-(AAoDo:C3=B4F!,CEATAo8BOr<&@=!2AA8c)

At this size Base62 happens to match Base64Url because of where the block rounding lands. On longer payloads Base64 edges ahead by a small constant factor, and Base85 stays the densest at the cost of a much noisier alphabet.

3. The Composition Layer: EncodedFormat

Finally there is EncodedFormat, which is the glue that combines the binary format and a chosen text codec into a

single StringFormat. Between those two layers is an optional transform step for arbitrary byte manipulation.

val format = EncodedFormat {

binaryFormat = PackedFormat { defaultEncoding = IntPacking.SIGNED }

transform = encryptingTransform

codec = Base62

}

val token = format.encodeToString(payload)A PayloadTransform is just a pair of encode/decode functions on a ByteArray. You get the packed bytes, return

whatever bytes you want, and the text codec runs on that. Two of them chain together with .then(...).

I mainly added this for encryption. In the Eignex case, the token rides along on the front-end between requests, so it has to be opaque. Wrapping a cipher is basically a few lines:

val encryptingTransform = object : PayloadTransform {

override fun encode(data: ByteArray): ByteArray = cipher.encrypt(data)

override fun decode(data: ByteArray): ByteArray = cipher.decrypt(data)

}The same interface covers a bunch of other useful things. Error-correcting codes (wrap Reed-Solomon this way and

you get tokens that survive a couple of mangled characters), compression for larger payloads, or a CRC checksum if

you’re worried about users truncating tokens they pasted from a log (there’s a checksum = Crc16 shorthand for

that one, though I haven’t personally needed it). There are full working

encryption

and

error-correction

examples in the repo.

PackedFormat is for dense transport, not durable storage. If you want something you can persist and evolve more

comfortably over time, swap in ProtoBuf instead.

Anyway, that’s kencode. Let me know if you find a fifth reason to pack state into a string. Source is at github.com/Eignex/kencode if you want to poke at it.

Serde was not the first to introduce compile-time serialization but it certainly popularized it. Rust doesn’t have runtime reflection in the first place, so compile-time codegen is basically the only option there. Serde itself was heavily inspired by Haskell, where typeclass-derived serialization (think Aeson’s

ToJSON/FromJSONor GHC’s genericderiving) has been the normal way to do this for ages. Pretty much all cool things in PL eventually trace back to Haskell. The mechanism in Serde is different from kotlinx.serialization (Serde uses procedural macros, kotlinx uses a full compiler plugin), but the end result is essentially the same: each type gets its own statically-typed Serializer implementation, and the format implementations just see a stream of typed calls. The coreSerializer/Deserializertraits in Serde look a lot likeKSerializerif you squint. This blog post has a good overview and the official docs are excellent as well. ↩︎Varint (LEB128) encodes an integer using only as many bytes as its value requires: values 0–127 fit in one byte, 128–16383 in two, and so on. This works well for small non-negative numbers but poorly for negatives, since -1 encodes as a large unsigned value (its two’s complement representation is all-ones, so varint needs the maximum number of bytes). ZigZag (protobuf) solves this by remapping signed integers to unsigned ones: 0→0, -1→1, 1→2, -2→3, and so on, interleaving negatives and positives so that small-magnitude values always encode compactly. The name comes from how the number line “zigzags” across zero as you count up: 0, -1, 1, -2, 2, and so on.

The actual bit trick is

(n shl 1) xor (n shr 31)for a 32-bit int (andshr 63for a 64-bit long). The arithmetic right-shift copies the sign bit across all positions, giving either all-zeros for non-negatives or all-ones for negatives. XOR-ing that with the left-shifted value flips every bit exactly when the input was negative, which is what produces the interleaving. Protobuf actually splits these into separate wire types (sint32/sint64) because the plainint32/int64types don’t ZigZag; you have to opt in. In kencode it’s controlled by@PackedType(IntPacking.SIGNED)on the field, or by changingdefaultEncodingon the format. ↩︎