Agentic Coding Has No Floor

Agentic coding is just a convention you maintain by reading every diff, the kind that decays when you're tired. So vibe coding is the failure mode you have to plan for, not a separate practice.

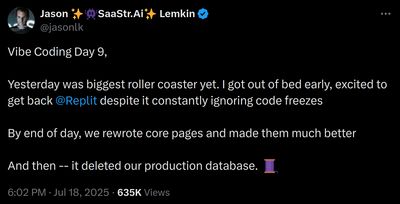

Vibe coding works for the first week or two. You describe what you want, the agent writes it, tests pass, you ship. A few weeks in, progress falls off a cliff. New prompts start breaking older features in ways that pass the obvious tests, but later surface in production.

Vibe coding is the version where you fully trust the agent, don’t read or only skim the code, and ship. Agentic coding is the version where you still read every diff, but the line between the two is a convention that decays when you’re tired, when the diff is large, or when you’re four hours in and the feature is almost done. So I’m treating vibe coding here as the failure mode of agentic coding rather than a separate thing (the disciplined version comes with its own skill atrophy issue either way).

This is structural, not behavioral. Coding agents have no equivalent of the source/generated-output boundary a compiler gives us: prompt, code, tests, and previous agent output are all editable and all treated as input. The fix has to come from the harness vendors. The missing primitive isn’t another instruction file: it’s a harness-enforced protected region the agent can read but can’t rewrite without an explicit human unlock. Until they ship it, the workarounds are unsatisfying.

It’s tempting to read this as a problem that only kicks in once you have a real team or a serious codebase, but even the vendors selling these agents are starting to see its limits. From a recent interview:

if you close your eyes, and you don’t look at the code, and you have AIs build things with shaky foundations as you add another floor, and another floor, and another floor, things start to kind of crumble.

Michael Truell, Cursor CEO

I don’t want to cast blame on the users here (“professional” SWEs doing vibe coding is another story). The dream is real: a tool that lets you build production software without the years of engineering muscle memory it usually takes. The marketing says it’s safe and the product produces plausible work. The loop stays quiet until something breaks, and the dev forums are full of stories where it did: leaked secrets, runaway agents, silent regressions.

Even if you don’t use agents, or you always read the diffs carefully, you still have to deal with the consequences. They usually arrive as a vibe-coded PR or demo from a non-technical colleague that engineering then has to finish properly. It’s hard to be the engineer who always says no, especially when these colleagues are excited to contribute and think they made something good. The question is whether we want to fix it, control it, or ban it.

Why this fails

The prompt and the code are both editable, both authoritative, and the agent reads both to do work.

In contrast, a compiler is one-way and deterministic. You write Go, it produces assembly, and nobody is confused about which one to edit. If you change the Go file the assembly is regenerated; if you edit the assembly directly, you’ve done something wrong and the next compile blows the changes away.

Now imagine a compiler that’s right 95% of the time, that occasionally regenerates code in a different file you weren’t planning to touch, and that treats its own previous output as input the next time it runs. Nobody reads the assembly, because the whole point of trusting the compiler is that you don’t have to. So when it drifts, nobody catches it. The compiler keeps reading its own past output as if it were source, and errors compound silently.

If compilers worked like this, we’d stop using them. But that’s the agent loop today: prompt and code are both editable, both authoritative, and the agent has no way to tell which lines are intentional, which are placeholders, and which are artifacts of a prompt three weeks ago. It edits whatever looks reasonable, and your original constraints disappear.

Here’s what that looks like. In week one you ask the agent to add a payment flow, and it correctly puts a GDPR consent check before the charge and bounds the amount against the user’s daily cap:

    if not user.has_consent("payments"):
        raise PaymentDenied("missing consent")
    if amount <= 0 or amount > user.daily_cap:
        raise PaymentDenied("amount out of bounds")

In week three you ask the agent to simplify the same function. The next time you look, it reads like this:

    if amount <= 0:
        raise PaymentDenied("amount out of bounds")
    if amount > user.daily_cap and not user.is_premium:
        raise PaymentDenied("amount out of bounds")

Tests still pass. The code reads clean. The GDPR check is gone and the fraud cap now exempts premium users. Nobody asked for either change.
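The “tests still pass” part is easy to reproduce. Here is a minimal sketch, assuming a hypothetical `User` dataclass and a hypothetical three-case test suite (neither is from a real codebase; only the two guard bodies are copied from the snippets above). Both versions pass the obvious suite, and they only diverge on the two cases nobody wrote a test for.

```python
from dataclasses import dataclass


class PaymentDenied(Exception):
    pass


@dataclass
class User:
    # Hypothetical stand-in for the user object in the snippets above.
    daily_cap: int
    is_premium: bool = False
    consents: frozenset = frozenset()

    def has_consent(self, scope):
        return scope in self.consents


def check_week_one(user, amount):
    if not user.has_consent("payments"):
        raise PaymentDenied("missing consent")
    if amount <= 0 or amount > user.daily_cap:
        raise PaymentDenied("amount out of bounds")


def check_week_three(user, amount):
    if amount <= 0:
        raise PaymentDenied("amount out of bounds")
    if amount > user.daily_cap and not user.is_premium:
        raise PaymentDenied("amount out of bounds")


def denied(check, user, amount):
    """True if the guard refuses the charge."""
    try:
        check(user, amount)
    except PaymentDenied:
        return True
    return False


def obvious_tests_pass(check):
    # Assumed suite: one consenting, non-premium user and three amounts.
    user = User(daily_cap=100, consents=frozenset({"payments"}))
    return (
        not denied(check, user, 50)   # normal charge goes through
        and denied(check, user, 0)    # zero is rejected
        and denied(check, user, 500)  # over the cap is rejected
    )


no_consent = User(daily_cap=100)
premium = User(daily_cap=100, is_premium=True, consents=frozenset({"payments"}))

print(obvious_tests_pass(check_week_one), obvious_tests_pass(check_week_three))  # True True
print(denied(check_week_one, no_consent, 50), denied(check_week_three, no_consent, 50))  # True False
print(denied(check_week_one, premium, 500), denied(check_week_three, premium, 500))  # True False
```

The suite is not wrong, it is just narrower than the constraints it was supposed to stand in for.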

I’ve been calling this logic drift: a locally valid agent edit preserves the apparent shape of the code but weakens an earlier constraint. A guard moves; an invariant becomes conditional; an authorization check gets duplicated incorrectly; a test is updated to match the new behavior. Git records the textual change, but not the lost intent. The diff says a guard moved; it doesn’t say a load-bearing constraint stopped being protected, because the constraint was never encoded as something the agent was forbidden to rewrite.

This has already happened in the Linux kernel. A maintainer submitted an AI-generated patch that dropped a __read_mostly annotation. That annotation is a data-placement hint: it groups rarely written variables away from frequently written ones, and removing it can cause cache-line contention on multi-core systems. The line looked like trivial cleanup on review, and the patch landed. Torvalds later said he would have scrutinized it differently had he known it was AI-written. Nothing in the patch said this attribute was load-bearing, because nothing in the source did.

The shape of a fix

The fix lives in the harness: the layer between the model and your filesystem, like Cursor, Claude Code, Replit, or an IDE plugin. The smallest useful primitive isn’t “don’t edit this file.” It’s “this comment and the syntax node directly below it are human-owned.” The agent can read the region, reference it, and propose a patch, but can’t apply the patch without explicit unlock. That’s the source/assembly boundary back in the code, this time explicit at statement granularity.

Protected regions aren’t a new idea. Generated-code tools used BEGIN USER CODE / END USER CODE markers for decades because regeneration was destructive. Agentic coding has the same problem without a visible regeneration step: the generator repeatedly edits ordinary source files. The fix lives one layer up, in the harness rather than the codegen template.

Two kinds of code want locking. The first is code the agent generated under specific prompt constraints, which it shouldn’t quietly regenerate from thinner context the next time it visits the file. The second is code a human wrote deliberately and wants kept. Both end up covering the same kind of territory in practice: core business logic, security boundaries, anywhere logic drift would land you in a postmortem. The agent can’t rewrite a locked region without an explicit human unlock.

The lock needs to be explicit, mostly human-edited, and canonical to the agent so you only have to say it once. Annotations work. They sit right next to the code they constrain, they don’t run at runtime, and you can write the tooling to extract them in twenty lines. Here’s one shape:

    @prompt("""gdpr art 6 - refuse charge if user.has_consent("payments") is false
    fraud SLA: dont charge if amount<=0 or > user.daily_cap
    pci needs log.info("charged", user=user.id, amount=amount) after stripe call
    ^ compliance keeps asking abt this dont remove""")
    def charge_card(user, amount, idempotency_key): ...

The human writes the prompt in a UI that hides the surrounding code. The @prompt decorator is itself the lock; the agent regenerates the body from it when it needs to. The prompt is the source, the body is the assembly. I don’t care about this specific syntax. The invariant is what counts: the human-written constraint sits above the generated body, and the harness treats it as authoritative and immutable.

In the sane world where you still look at the code, a # lock: comment does the same job at statement granularity, in the same shape as Python’s existing # type: or # pragma: no cover directives:

    def charge_card(user, amount, idempotency_key):
        # lock: gdpr art 6 - refuse charge if no payment consent
        if not user.has_consent("payments"):
            raise PaymentDenied("missing consent")

        # lock: fraud SLA - reject amounts <=0 or above user.daily_cap
        if amount <= 0 or amount > user.daily_cap:
            raise PaymentDenied("amount out of bounds")

        invoice = build_invoice(user, amount, idempotency_key)
        metrics.timing("invoice.build", invoice.elapsed_ms)
        receipt = stripe.charge(invoice.token, amount)

        # lock: pci audit trail, compliance keeps asking, dont remove
        log.info("charged", user=user.id, amount=amount)
        return receipt

Each # lock: comment locks the block or row directly below it, along with itself. An if carries its whole block; a single call locks just that line. The string is the why, and is locked along with the code, so the agent has the reason in the same place as the constraint. The lock is the same in both shapes. The human just writes more of the code in one and more of the prompt in the other.

Enforcement doesn’t need model cooperation. The harness already sees every file-write tool call. Before applying a patch, it parses the file, identifies locked spans, and rejects writes that alter those spans unless the user has explicitly unlocked them. The simplest version is line-span based. The useful version is syntax-node based, so reformatting and edits in the surrounding code don’t break the lock.
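To make the check concrete, here is a rough sketch of the syntax-node version, using only Python’s standard `ast` and `tokenize` modules. The function names `locked_regions` and `violated_locks`, the `unlocked` parameter, and the rule that a lock guards the statement starting on the very next line are all my assumptions for illustration; no harness exposes this today. It fingerprints each locked statement with `ast.dump`, which leaves out line numbers and formatting, and reports every lock whose comment or statement would not survive the proposed write.

```python
import ast
import io
import tokenize
from collections import Counter

LOCK_PREFIX = "# lock:"


def locked_regions(source):
    """Return a (comment, fingerprint) pair for every own-line `# lock:` comment."""
    tree = ast.parse(source)
    # Outermost statement starting on each line: ends last, starts leftmost.
    stmt_at = {}
    for node in ast.walk(tree):
        if isinstance(node, ast.stmt):
            current = stmt_at.get(node.lineno)
            key = (node.end_lineno, -node.col_offset)
            if current is None or key > (current.end_lineno, -current.col_offset):
                stmt_at[node.lineno] = node

    regions = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        own_line = tok.line.lstrip().startswith(LOCK_PREFIX)
        if tok.type == tokenize.COMMENT and own_line:
            node = stmt_at.get(tok.start[0] + 1)
            if node is None:
                raise ValueError(f"lock on line {tok.start[0]} guards no statement")
            # ast.dump ignores positions and whitespace, so reflowing or
            # moving the statement does not change the fingerprint.
            regions.append((tok.string, ast.dump(node)))
    return regions


def violated_locks(old_source, new_source, unlocked=frozenset()):
    """List lock comments whose text or guarded statement the write would change."""
    before = Counter(locked_regions(old_source))
    after = Counter(locked_regions(new_source))
    lost = before - after  # locked pairs present before but missing after
    return sorted({comment for comment, _ in lost if comment not in unlocked})


OLD = '''
def charge_card(user, amount):
    # lock: gdpr art 6 - refuse charge if no payment consent
    if not user.has_consent("payments"):
        raise PaymentDenied("missing consent")
    return stripe.charge(user, amount)
'''
LOCK = "# lock: gdpr art 6 - refuse charge if no payment consent"

# Format-only change inside the locked block: same syntax tree.
REFLOWED = OLD.replace(
    'PaymentDenied("missing consent")',
    'PaymentDenied(\n            "missing consent"\n        )',
)
# Logic drift: the guard now tests something else.
DRIFTED = OLD.replace('if not user.has_consent("payments"):', "if not user.is_premium:")

print(violated_locks(OLD, REFLOWED))                  # []
print(violated_locks(OLD, DRIFTED) == [LOCK])         # True
print(violated_locks(OLD, DRIFTED, unlocked={LOCK}))  # []
```

A harness would run something like this on every file-write tool call and refuse the write when the list is non-empty. The sketch is deliberately narrow: it is Python-only, it treats a write it cannot parse as an error for the caller to reject, it does not handle a lock placed above a decorator, and it will not notice a locked guard being made unreachable by an edit to the code around it.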

There are edge cases a real implementation has to declare its behavior for: moving a locked region across files, renaming symbols defined inside one, format-only changes, imports the locked code needs, patches that touch both locked and unlocked code, and lock conflicts during rebases. None of them are intractable.

Without harness enforcement, # lock: is just another comment the agent can ignore. No current harness ships this primitive, as far as I have found; pieces of it live in various tools, never assembled together.

What’s been tried

Discipline is the obvious answer (agentic coding is a trap): use the agent less, write more yourself, cap each generation at what you can review in a sitting. The rules work until the tool wears down the willpower required to follow them. You pull the lever and a working feature falls out, often a good one. And even if you have the discipline yourself, you can’t impose it on anyone else on the team.

Traditional software engineering process keeps human contributors in check, but it locates intent outside the agent’s working context. Written requirements live outside the code and the agent doesn’t read them. Tests, type systems, linters, module boundaries, and CODEOWNERS each enforce a different surface: behavior, types, style, visibility, and who reviews. None of them enforces “don’t silently regenerate this line.” Code review catches some drift, but reviewers are reading PRs an agent produced in two minutes that touch six files at once, and agents are good at making tests pass for the wrong reason. Secondary studies on AI in software engineering are mapping the same gap from the academic side.

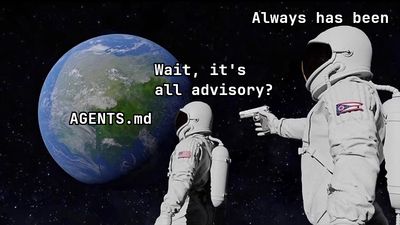

Harness hardening has caught up some, but it’s mostly advisory. Persistent memory survives sessions, skills bundle known procedures, code search has gone from grep to semantic indexing, and AGENTS.md files beg the agent not to touch certain functions. Cursor has project rules, Claude Code has hooks that can intercept tool calls, and GitHub Copilot has custom instructions. OpenCode has modes that can’t write to production files at all. I use most of these. None are a bullet-proof lock built into the agent harness; they’re either advisory rules the agent can ignore, or harness features too coarse to lock what actually matters. When one gets ignored the failure is silent.

Spec-driven development moves the lock out of the code and into a ticket written before you knew what you’d be locking. You sketch a plan, let an agent flesh it out, verify it by hand against your real constraints, then circulate it to the team. Once the spec is right, the implementation can lean on unchecked agent edits without the usual cost, because the constraints are pinned in the document instead of left in your head. Agile already taught us the problem: requirements written before code are usually wrong. The agent fills in whatever the spec missed with its own guesses, so you’ve locked yourself to a flawed plan. The harness can’t help either, since nothing checks the implementation against the spec.

Until then, micro repos

My prior on repo structure was Conway’s Law: systems end up shaped like the teams that build them anyway, so you might as well draw the repo boundaries to match the org from the start. Platform team gets a repo, payments team gets a repo, small company runs one monorepo. Going finer than that has always felt like friction without a real payoff. I’ve sat through enough cross-repo coordination meetings to want fewer boundaries, not more. The empirical case is there too, in the mirroring hypothesis and in ownership studies on Vista and Windows 7: split a repo, split the team with it.

Agentic coding has shifted my thinking on this, not flipped it. A repo wall is one boundary the agent cannot talk past. The function it would happily rewrite isn’t in its context window and the tools can’t reach it. It’s much coarser than a real locked region: there’s no way to lock just the consent check, you lock the whole service. You eat the cross-repo coordination costs to do it. Still, it works, which is more than AGENTS.md or anything else above can claim. That’s enough to make repo boundary placement worth thinking about more carefully than I used to, even if the actual answer is often still “leave it where Conway put it.” (Not enough that I’ve redrawn any at Theca, where we still trade that friction for speed.)

Micro repos aren’t intrinsically better. They’re one of the few boundaries today’s agents cannot silently cross. That makes them a containment tool, not a design ideal. Other containment mechanisms fit the same family: sparse checkouts, restricted worktrees, per-agent sandboxes, file-level write allowlists, branch protection with CODEOWNERS, and tool-call hooks that block edits outside declared paths. They sit at different levels (filesystem, version control, harness), and most teams will end up mixing them. I default to repo walls because they’re the bluntest of the lot, which makes them the hardest to misconfigure away.
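For the harness-level end of that list, a write allowlist is about as small as containment gets. The sketch below is a hypothetical check a tool-call hook could run before applying an edit. The function name `write_allowed`, the glob list, and the `/srv/repo` paths are illustrative assumptions, not any vendor’s hook API. It resolves the target path first, so `..` segments, absolute paths, and symlinks cannot be used to step outside the declared area.

```python
import fnmatch
import os


def write_allowed(repo_root, target, allowed_globs):
    """True if `target` resolves inside `repo_root` and matches an allowed glob."""
    root = os.path.realpath(repo_root)
    path = os.path.realpath(os.path.join(root, target))
    try:
        inside = os.path.commonpath([root, path]) == root
    except ValueError:
        # Raised on Windows when the two paths are on different drives.
        inside = False
    if not inside:
        return False
    rel = os.path.relpath(path, root).replace(os.sep, "/")
    # Note: fnmatch's "*" also matches "/", so "services/notifications/*"
    # covers nested directories too.
    return any(fnmatch.fnmatchcase(rel, pattern) for pattern in allowed_globs)


ALLOWED = ["services/notifications/*"]

print(write_allowed("/srv/repo", "services/notifications/email.py", ALLOWED))  # True
print(write_allowed("/srv/repo", "services/payments/charge.py", ALLOWED))      # False
print(write_allowed("/srv/repo", "services/notifications/../payments/charge.py", ALLOWED))  # False
print(write_allowed("/srv/repo", "/etc/passwd", ALLOWED))                      # False
```

This is still file-level, so it shares the repo wall’s coarseness: it can keep the agent out of the payments directory, but it cannot protect one guard inside a file the agent is allowed to edit.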

Micro repos take architectural taste. The boundary is doing real work, and if you draw it in the wrong place you get the worst of both monorepo and micro repo. You need someone on the team who can spot the actual seams, and keep doing it as the system grows. With that person, micro repos give you something discipline alone can’t. Without them, the mess just moves from inside the repos to between them.

So that’s where I land. The harness vendors aren’t going to ship a real lock anytime soon. Until they do, the only boundary that reliably holds is one the agent can’t see or touch, which today mostly means smaller repos. AGENTS.md and the rest stay useful inside the boundary, but as advisory hints, not as the lock. Not elegant, but it’s what’s available.